European Exascale System Interconnect and Storage

The ExaNeSt project, started in December 2015 and funded under the EU H2020 research framework (call H2020-FETHPC-2014, n. 671553), is a pillar of a larger initiative that aims to demonstrate the efficient use of low-power architectures in Exascale computing platforms. ExaNeSt combines industrial and academic research expertise to design the architecture and deploy a fully functional demonstrator of an innovative system-level interconnect, distributed NVM (Non-Volatile Memory) storage, and an advanced cooling infrastructure for an ARM-based ExaFlops-class supercomputer.

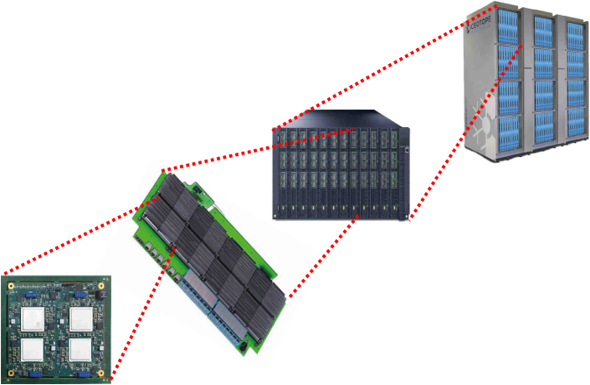

The ExaNeSt interconnect, ExaNet (shown in the figure), is split into three main components:

- the Network Interface (NI), implemented close to each end-node,

- the intra-rack network IP based on the APEnet architecture,

- a novel microarchitecture to be employed as Top-of-Rack switches.

The building block is the Xilinx Zynq UltraScale+ FPGA, which integrates four 64-bit ARMv8 Cortex-A53 hard cores running at 1.5 GHz.

The Node is the Quad-FPGA daughterboard (QFDB), containing four Zynq UltraScale+ FPGAs, 64 GB of DRAM and 512 GB of SSD storage, connected through the ExaNeSt Tier 0 network.

The first Mezzanine prototype (Track-1) houses four QFDBs hardwired in a ring topology, with two HSS links (2 × 16 Gb/s) per edge and per direction.
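The ring described above can be sketched in a few lines of Python. This is only an illustrative model: the function names and the 0 to 3 numbering of the QFDBs are assumptions made for the example, and the bandwidth figure is the raw line rate taken from the text, ignoring any encoding or protocol overhead.

```python
from typing import Tuple

# Figures taken from the text; all identifiers are hypothetical.
QFDBS_PER_MEZZANINE = 4   # QFDBs hardwired in a ring
LINKS_PER_EDGE = 2        # HSS links per edge and per direction
LINK_RATE_GBPS = 16       # raw rate of one HSS link, in Gb/s


def ring_neighbours(node: int, size: int = QFDBS_PER_MEZZANINE) -> Tuple[int, int]:
    """Return the (previous, next) QFDB indices of `node` in the ring."""
    return ((node - 1) % size, (node + 1) % size)


def ring_hops(src: int, dst: int, size: int = QFDBS_PER_MEZZANINE) -> int:
    """Minimum number of ring edges to traverse between two QFDBs."""
    forward = (dst - src) % size
    return min(forward, size - forward)


def edge_bandwidth_gbps() -> int:
    """Raw bandwidth of one ring edge in one direction."""
    return LINKS_PER_EDGE * LINK_RATE_GBPS


if __name__ == "__main__":
    for qfdb in range(QFDBS_PER_MEZZANINE):
        print(qfdb, ring_neighbours(qfdb))
    print(edge_bandwidth_gbps(), "Gb/s per edge and per direction")
```

With four nodes in the ring, any QFDB reaches any other QFDB on the same Mezzanine in at most two hops.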

Nine Mezzanines will fit within a chassis of approximately 11U in height (the blades are hosted vertically). Thus, each chassis hosts 36 QFDBs, meaning 576 ARM cores and 2.3 TB of DDR4 memory.

Each Rack will host 3 chassis.
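The chassis figures quoted above follow directly from the per-board numbers, as the short calculation below shows. The constant and function names are made up for the example. The rack-level totals are not quoted in the text: they are simply the same arithmetic extended to three chassis, assuming every chassis is fully populated with four-QFDB Mezzanines.

```python
# Per-board figures taken from the text; identifiers are hypothetical.
CORES_PER_FPGA = 4
FPGAS_PER_QFDB = 4
DRAM_PER_QFDB_GB = 64
QFDBS_PER_MEZZANINE = 4
MEZZANINES_PER_CHASSIS = 9
CHASSIS_PER_RACK = 3


def totals(chassis: int = 1) -> dict:
    """Aggregate QFDB, ARM core and DRAM counts for `chassis` full chassis."""
    qfdbs = chassis * MEZZANINES_PER_CHASSIS * QFDBS_PER_MEZZANINE
    return {
        "qfdbs": qfdbs,
        "arm_cores": qfdbs * FPGAS_PER_QFDB * CORES_PER_FPGA,
        "dram_gb": qfdbs * DRAM_PER_QFDB_GB,
    }


if __name__ == "__main__":
    print("chassis:", totals(1))
    print("rack:   ", totals(CHASSIS_PER_RACK))
```

For a single chassis this gives 36 QFDBs, 576 cores and 2304 GB of DRAM, which matches the rounded 2.3 TB figure.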

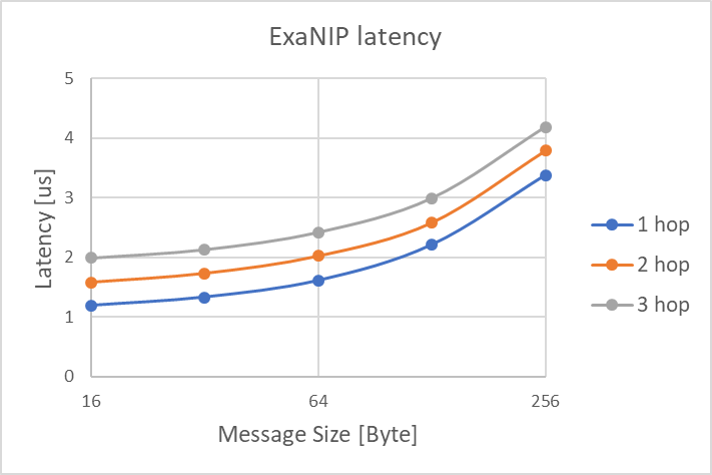

The INFN APE Research group is responsible for the ExaNet Network IP that provides switching and routing features and manages the communication over the High Speed Serial (HSS) links through different levels of the ExaNeSt interconnect hierarchy:

- the high-throughput intra-QFDB level (Tier 0) for data transmission among the four FPGAs of the ExaNeSt node;

- the intra-Mezzanine level (Tier 1) directly connecting the network FPGAs of different nodes within the same mezzanine;

- the inter-Mezzanine level (Tier 2), which manages the connectivity of the Mezzanine through SFP+ connectors and allows a direct network to be implemented among the QFDBs within a Chassis.
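To make the three tiers concrete, the sketch below classifies a transfer between two FPGAs of the same Chassis by the highest tier it has to cross. The `(mezzanine, qfdb, fpga)` coordinates and the `highest_tier` function are hypothetical and chosen only for illustration; they are not the addressing scheme used by the ExaNet Network IP.

```python
from typing import NamedTuple, Optional


class FpgaAddress(NamedTuple):
    """Hypothetical coordinates of an FPGA inside one Chassis."""
    mezzanine: int  # Mezzanine index within the Chassis
    qfdb: int       # QFDB index within the Mezzanine
    fpga: int       # FPGA index within the QFDB


def highest_tier(src: FpgaAddress, dst: FpgaAddress) -> Optional[int]:
    """Return the highest interconnect tier crossed, or None if src == dst."""
    if src == dst:
        return None   # same FPGA, no network traversal
    if src.mezzanine != dst.mezzanine:
        return 2      # inter-Mezzanine level
    if src.qfdb != dst.qfdb:
        return 1      # intra-Mezzanine level, between QFDBs
    return 0          # intra-QFDB level, between FPGAs of one node


if __name__ == "__main__":
    a = FpgaAddress(mezzanine=0, qfdb=0, fpga=0)
    print(highest_tier(a, FpgaAddress(0, 0, 3)))  # same QFDB
    print(highest_tier(a, FpgaAddress(0, 2, 1)))  # same Mezzanine
    print(highest_tier(a, FpgaAddress(5, 1, 0)))  # different Mezzanine
```

A transfer classified as Tier 1 or Tier 2 may still use Tier 0 links at either end, since only the network FPGA of each node is attached to the higher tiers.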

ExaNet Network IP

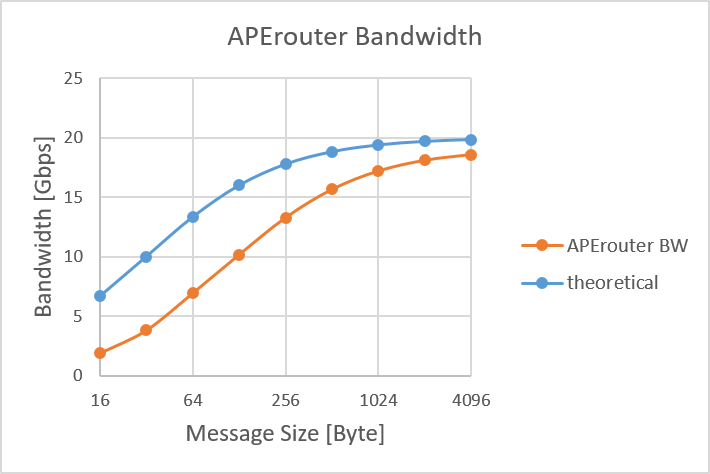

- the APErouter, handling the routing and switching mechanism of the network IP

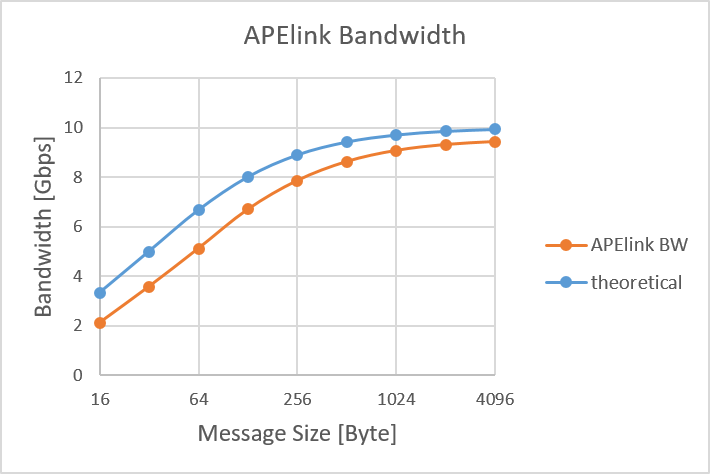

- the APElink I/O interface, managing the data transfers over the HSS links